Auto-Research-In-Sleep GitHub: AI's Autonomous Leap in 2026

The pursuit of knowledge is an unending journey, often constrained by human limitations: time, cognitive load, and the sheer volume of information. What if research could continue, even while we sleep? This is no longer a futuristic fantasy but a tangible reality, spearheaded by projects like Auto-Research-In-Sleep GitHub. As of April 2026, autonomous AI research agents are rapidly evolving, promising to reshape how we approach scientific discovery, software development, and market analysis.

The concept of an AI system independently conducting research, formulating hypotheses, executing experiments, and analyzing results has moved from theoretical discussions to practical, open-source implementations. One such prominent project is ARIS (Auto-Research-In-Sleep), a lightweight, Markdown-only framework designed for autonomous machine learning research. It facilitates cross-model review loops, idea discovery, and experiment automation, operating without framework dependencies or vendor lock-in. ARIS is designed to work with a range of large language model (LLM) agents, such as Claude Code, Codex, and OpenClaw, or any other compatible agent, making it a versatile tool for researchers and developers alike. You can explore its foundational repository at ARIS ⚔️ (Auto-Research-In-Sleep) on GitHub.

This article examines ARIS and the broader implications of autonomous research in 2026, covering its capabilities, challenges, and future trajectory. We will analyze how such systems are not just automating tasks but augmenting human intelligence and potentially speeding up innovation. Understanding these advancements is vital for anyone operating in the fast-paced world of technology and business, where staying ahead requires a grasp of cutting-edge tools and methodologies. This evolution is happening alongside other shifts in the technology sector, including a growing set of AI-driven tools such as MCP Codex, which also explore the frontiers of automated intelligence.

Understanding Auto-Research-In-Sleep GitHub (ARIS)

ARIS, the Auto-Research-In-Sleep project available on GitHub, represents a significant stride in autonomous AI. Unlike heavy, framework-dependent systems, ARIS prides itself on a minimalist design. Its core philosophy revolves around using Markdown for defining skills and workflows, ensuring flexibility and ease of integration. This approach means that researchers are not tied to a specific programming language or complex API structure; instead, they can define research objectives and methodologies using a human-readable, widely adopted format.

The project's strength lies in its ability to orchestrate sophisticated research pipelines. Imagine defining a research question, providing initial data or parameters, and then allowing an AI agent to:

- Discover Ideas: Proactively identify relevant concepts, theories, and existing work.

- Formulate Hypotheses: Generate testable predictions based on discovered information.

- Design Experiments: Outline the steps and conditions necessary to validate or refute hypotheses.

- Automate Execution: Run simulations, interact with APIs, or even write code to perform experiments.

- Cross-Model Review Loops: Utilize multiple LLMs or AI agents to review each other's work, providing critical feedback and refinement, mimicking a peer-review process.

- Synthesize Results: Compile findings, generate reports, and even suggest next steps.

This entire process, from ideation to conclusion, can run with minimal human intervention, effectively performing 'research in sleep' from a human operator's perspective. It's akin to having a tireless research assistant working around the clock, exploring avenues that might otherwise be overlooked due to time constraints or cognitive biases.

The Markdown-Only Philosophy and LLM Integration

The choice of Markdown as the primary interface for ARIS is deliberate. It lowers the barrier to entry for researchers who may not be expert programmers but are familiar with structured text. Markdown files can define tasks, objectives, constraints, and even the expected output format for the LLM agents. This simplicity fosters a more natural interaction with the AI, allowing users to focus on the research problem rather than the technical overhead.
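To make the idea concrete, here is a minimal Python sketch of how a Markdown file could carry an objective, constraints, and an output format that a program then hands to an agent. The file layout, the section names, and the `parse_skill` helper are all assumptions made for illustration; they are not taken from the ARIS repository.

```python
import re

# Hypothetical skill file. The section names are illustrative only and are
# not ARIS's actual schema.
SKILL_MD = """\
# Skill: summarize-paper

## Objective
Summarize the key contribution of a paper in three sentences.

## Constraints
- Cite only claims found in the paper.
- Keep the summary under 100 words.

## Output Format
A Markdown bullet list.
"""


def parse_skill(markdown_text: str) -> dict[str, str]:
    """Split a Markdown document into {heading: body} using level-2 headings."""
    sections: dict[str, str] = {}
    title_match = re.search(r"^# (.+)$", markdown_text, flags=re.MULTILINE)
    if title_match:
        sections["title"] = title_match.group(1).strip()
    # With a capture group, re.split returns
    # [preamble, heading1, body1, heading2, body2, ...].
    parts = re.split(r"^## (.+)$", markdown_text, flags=re.MULTILINE)
    for heading, body in zip(parts[1::2], parts[2::2]):
        sections[heading.strip()] = body.strip()
    return sections


skill = parse_skill(SKILL_MD)
print(skill["title"])
print(skill["Constraints"])
```

Because the skill is plain text, the same file can be read by a person, versioned in Git, and passed to whichever agent is in use.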

The agnostic nature of ARIS regarding the underlying LLM is another key differentiator. Whether a team prefers the coding capabilities of Claude Code, the general intelligence of OpenAI's models, or specialized agents like OpenClaw, ARIS can integrate them. This flexibility is crucial in 2026, as the LLM landscape continues to diversify, with new models offering unique strengths in different domains. This means users can swap out LLMs to find the best fit for a particular research task, optimizing for cost, speed, or accuracy without changing the core ARIS workflow.

Core Mechanics of Auto-Research-In-Sleep: How It Operates

The operational framework of ARIS is built on a series of iterative loops, designed to mimic and accelerate the scientific method. It's not a single script but an intelligent orchestration of tasks, feedback, and refinement.

Defining Research Pipelines

At the heart of ARIS is the concept of a research pipeline. Users define a topic, often using a command like /research-pipeline "Your Topic". This initial prompt sets the direction for the autonomous agents. The system then breaks down this broad topic into smaller, manageable sub-tasks. For instance, researching a new machine learning algorithm might involve steps like:

- Literature Review (research-lit)

- Hypothesis Generation

- Experiment Design

- Code Implementation

- Execution and Data Collection

- Analysis and Reporting

- Refinement and Iteration

Each of these steps can be executed by one or more LLM agents, with their outputs fed back into the system for review and subsequent action.
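The staged structure described above can be sketched as an ordered list of functions that each read and extend a shared context. This is only an illustration: apart from `research-lit`, the stage labels are invented for the example, and the stub stage stands in for a real call to an LLM agent.

```python
from typing import Callable

Context = dict[str, str]
Stage = Callable[[Context], Context]

# Only "research-lit" appears in the article; the other labels are made up here.
STAGE_NAMES = [
    "research-lit",
    "hypothesis",
    "experiment-design",
    "implementation",
    "execution",
    "analysis",
    "refinement",
]


def make_stub_stage(name: str) -> Stage:
    """Return a placeholder stage; a real stage would prompt an LLM agent."""

    def stage(context: Context) -> Context:
        previous = context.get("last_stage", "topic")
        updated = dict(context)  # copy so earlier snapshots stay unchanged
        updated[name] = f"<{name} output based on {previous}>"
        updated["last_stage"] = name
        return updated

    return stage


def run_pipeline(topic: str, stage_names: list[str]) -> Context:
    """Run each stage in order, feeding every stage the accumulated context."""
    context: Context = {"topic": topic}
    for name in stage_names:
        context = make_stub_stage(name)(context)
    return context


result = run_pipeline("sparse attention for long documents", STAGE_NAMES)
for name in STAGE_NAMES:
    print(f"{name}: {result[name]}")
```

Passing the whole context forward is one simple way to let later stages build on earlier outputs; a real system would likely trim or summarize it to fit a model's context window.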

Autonomous Progression and Feedback Loops

A critical feature is the ability for the system to autonomously proceed (AUTO_PROCEED: true) through these steps. However, as some users have noted, this isn't always a flawless process. One GitHub issue highlights a common challenge: "自动化无效" (Automation Invalid), where the system, using a GLM-5 + MiniMAX 2.5 combination, frequently stops, waiting for input. This indicates that while autonomous, these systems still require robust error handling and contextual understanding from the LLMs to maintain continuous operation. The ability of the base model to interpret instructions and generate appropriate next steps is paramount.

"The promise of 'research in sleep' hinges on truly robust autonomous progression. When agents stall, it exposes the current limitations in contextual understanding and error recovery, even with advanced LLMs like GLM-5 and MiniMAX 2.5. Bridging these gaps is the next frontier for autonomous research platforms."

The feedback loops are where ARIS truly shines. After an LLM completes a task, another agent or even the same agent with a different prompt can review the output. This cross-model review helps identify errors, suggest improvements, and ensure the quality and accuracy of the generated research. This iterative refinement is a cornerstone of effective research, now accelerated by AI.
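A review loop of this kind can be sketched in a few lines of Python. The sketch assumes two callables, one that drafts and one that reviews; both are stubs here, and the function names and the `max_rounds` cap are illustrative choices rather than anything defined by ARIS.

```python
from typing import Callable, Optional

DraftFn = Callable[[str, Optional[str]], str]   # (task, feedback) -> draft
ReviewFn = Callable[[str], Optional[str]]       # draft -> feedback, or None if accepted


def review_loop(
    draft_fn: DraftFn, review_fn: ReviewFn, task: str, max_rounds: int = 3
) -> tuple[str, int]:
    """Alternate drafting and reviewing until the reviewer accepts or rounds run out.

    Returns the last draft and the number of review rounds performed. If the
    cap is reached, the returned draft has not been re-reviewed.
    """
    draft = draft_fn(task, None)
    for round_number in range(1, max_rounds + 1):
        feedback = review_fn(draft)
        if feedback is None:
            return draft, round_number
        draft = draft_fn(task, feedback)
    return draft, max_rounds


def stub_author(task: str, feedback: Optional[str]) -> str:
    """Stand-in for the drafting model."""
    base = f"Plan for {task}"
    return f"{base} (revised: {feedback})" if feedback else base


def stub_reviewer(draft: str) -> Optional[str]:
    """Stand-in for a second model that insists on a baseline comparison."""
    return None if "baseline" in draft else "add a baseline comparison"


final_draft, rounds = review_loop(stub_author, stub_reviewer, "sparse attention")
print(rounds, final_draft)
```

The round cap matters in practice: without it, two models that never agree would keep consuming tokens indefinitely.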

Key Components and Architecture of ARIS

The architecture of ARIS, while lightweight, is sophisticated in its design, leveraging several key components to enable its autonomous capabilities:

- Markdown Skill Definitions: These are the instructions and templates that guide the LLMs. They define what a 'skill' is (e.g., 'perform web search', 'summarize paper', 'write code snippet') and how it should be executed.

- LLM Agent Orchestrator: This component manages the interaction with various LLMs. It sends prompts, receives responses, and directs the flow of information between different stages of the research pipeline.

- Memory Module: Essential for maintaining context throughout a long research process. This module stores intermediate results, discovered facts, and previous decisions, allowing the LLMs to build upon prior work and avoid redundant efforts.

- Tool Integration: While ARIS is Markdown-only for skills, it integrates with external tools for practical execution. This includes web search capabilities, code interpreters, and potentially data analysis libraries.
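As a rough illustration of the memory component, the sketch below stores intermediate results by stage, skips exact duplicates, and can be serialized to JSON between runs. The `ResearchMemory` class and its methods are hypothetical and do not describe how ARIS actually persists state.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ResearchMemory:
    """Hypothetical in-memory store for intermediate research results."""

    entries: list[dict[str, str]] = field(default_factory=list)

    def remember(self, stage: str, content: str) -> bool:
        """Store a result; return False if an identical entry already exists."""
        entry = {"stage": stage, "content": content}
        if entry in self.entries:
            return False
        self.entries.append(entry)
        return True

    def recall(self, stage: str) -> list[str]:
        """Return everything recorded for a given stage, oldest first."""
        return [e["content"] for e in self.entries if e["stage"] == stage]

    def to_json(self) -> str:
        """Serialize the memory so a later session can reload it."""
        return json.dumps(self.entries, indent=2)


memory = ResearchMemory()
memory.remember("research-lit", "Paper A reports linear-time attention.")
memory.remember("research-lit", "Paper A reports linear-time attention.")  # ignored duplicate
memory.remember("hypothesis", "Linear attention preserves accuracy on long inputs.")
print(memory.recall("research-lit"))
```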

Challenges in Tool Integration: The Websearch Conundrum

Tool integration, especially web search, is a common point of friction. As one user reported in a GitHub issue, "research-lit这一步websearch有点问题" (research-lit step websearch has a problem), where the web search component returned "did 0 searches in 2s." This issue often stems from API misconfigurations or limitations of the specific LLM agent (e.g., Claude Code with GLM4.7 via cc switch) in invoking external web search functionalities. Reliable access to up-to-date information through web search is critical for any autonomous research system, and ensuring seamless API integration is an ongoing development challenge.
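One defensive pattern for this kind of failure is to treat an empty search result as an error instead of letting the pipeline continue with no sources. The sketch below assumes the search tool can be wrapped as a Python callable returning a list of results; `checked_search` and `EmptySearchError` are names invented for this example.

```python
from typing import Callable


class EmptySearchError(RuntimeError):
    """Raised when a search tool returns fewer results than required."""


def checked_search(
    search_fn: Callable[[str], list[str]], query: str, min_results: int = 1
) -> list[str]:
    """Run a search and fail loudly if it comes back (nearly) empty."""
    results = search_fn(query)
    if len(results) < min_results:
        raise EmptySearchError(
            f"search for {query!r} returned {len(results)} result(s); "
            "check API keys, network access, and tool permissions"
        )
    return results


def broken_search(query: str) -> list[str]:
    """Stub that mimics a misconfigured tool returning nothing."""
    return []


def working_search(query: str) -> list[str]:
    """Stub that mimics a working tool."""
    return [f"result about {query}"]


print(checked_search(working_search, "linear attention"))
try:
    checked_search(broken_search, "linear attention")
except EmptySearchError as error:
    print(f"stopped early: {error}")
```

Failing at the search step makes the problem visible immediately, rather than surfacing later as a literature review with no citations.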

Use Cases and Applications of Autonomous Research

The potential applications of Auto-Research-In-Sleep GitHub extend across numerous domains, from academic research to commercial product development. In 2026, we are seeing these systems move beyond theoretical demonstrations to practical, value-generating tools.

Accelerating Scientific Discovery

For scientists, ARIS-like systems can dramatically accelerate the initial phases of research. Imagine an AI sifting through millions of scientific papers, identifying emerging trends, correlating disparate findings, and even proposing novel experimental designs. This allows human scientists to focus on higher-level problem solving, hypothesis refinement, and interpreting complex results, rather than the tedious work of literature review and preliminary experimentation.

Automated Software Engineering and ML Experimentation

In software development and machine learning, autonomous research systems are proving invaluable. Andrej Karpathy's AutoResearch project, for instance, demonstrated the capability to run 50 AI experiments overnight without human input on a single GPU. This showcases the power of autonomous experiment loops, a design pattern that applies broadly beyond just ML training. ARIS similarly enables developers to define parameters for code generation, bug hunting, or testing different model architectures, allowing the system to iterate through possibilities while engineers focus on architectural design and strategic planning.
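The autonomous experiment loop pattern can be shown with a toy example: propose a configuration, run it, keep the best result, and repeat until a fixed budget is spent. This is a generic random-search sketch, not the code of AutoResearch or ARIS; the `run_experiment` function is a stand-in for a real training run, and its loss formula is made up.

```python
import random
from typing import Optional


def run_experiment(learning_rate: float) -> float:
    """Toy stand-in for a training run: the loss is lowest near lr = 0.01."""
    return (learning_rate - 0.01) ** 2


def experiment_loop(num_experiments: int, seed: int = 0) -> tuple[Optional[float], float]:
    """Try random learning rates within a fixed budget and keep the best one."""
    rng = random.Random(seed)  # seeded so repeated runs are reproducible
    best_lr: Optional[float] = None
    best_loss = float("inf")
    for _ in range(num_experiments):
        # Sample on a log scale between 1e-4 and 1e-1.
        learning_rate = 10 ** rng.uniform(-4, -1)
        loss = run_experiment(learning_rate)
        if loss < best_loss:
            best_lr, best_loss = learning_rate, loss
    return best_lr, best_loss


best_lr, best_loss = experiment_loop(num_experiments=50)
print(f"best learning rate: {best_lr:.5f}, loss: {best_loss:.2e}")
```

In an agent-driven version, the proposal step would come from a model reading previous results instead of a random sampler, but the budgeted propose-run-compare structure stays the same.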

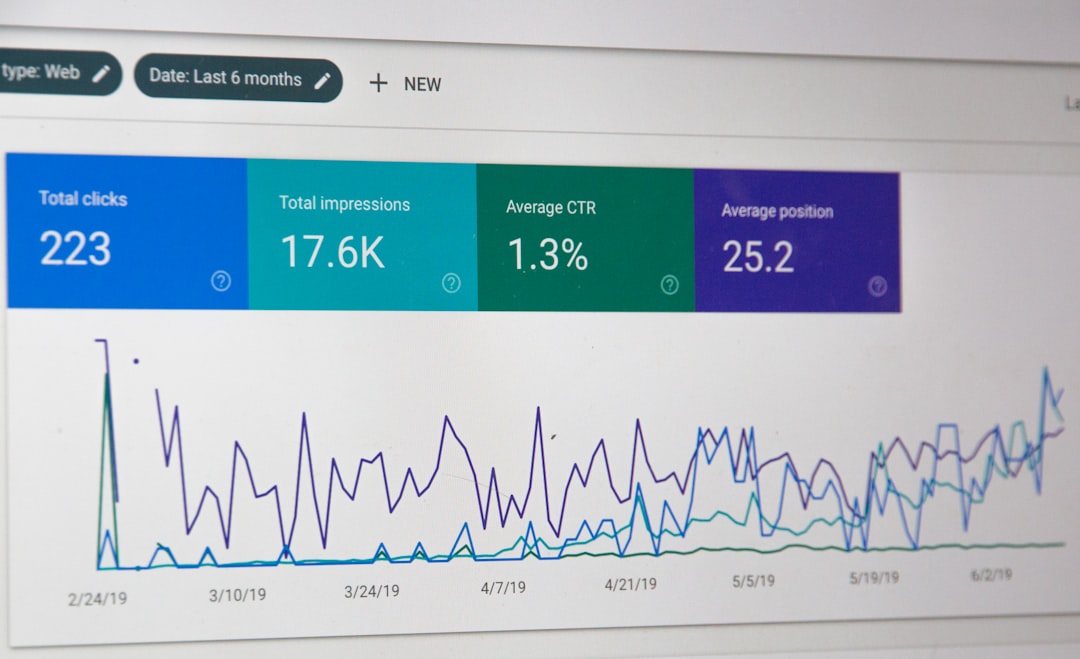

Market Analysis and Business Intelligence

Businesses can leverage autonomous research for rapid market analysis, competitor intelligence, and trend forecasting. An ARIS agent could continuously monitor news, social media, and industry reports, identifying shifts in consumer sentiment, emerging technologies, or regulatory changes. This provides businesses with a constantly updated, data-backed understanding of their operating environment, informing strategic decisions and enabling agile responses to market dynamics. This capability is becoming as fundamental as optimizing business processes, much like understanding applicant funnel dynamics with a comprehensive guide for 2026.

Content Generation and Knowledge Management

Beyond core research, ARIS can also assist in generating comprehensive reports, summaries, and educational content based on its findings. This could streamline knowledge transfer within organizations or even power advanced personalized learning platforms, adapting content based on individual learning needs and research interests.

Challenges and Limitations in 2026

While the promise of autonomous research is immense, several challenges and limitations persist as of April 2026.

Reliability and Hallucination

LLMs, despite their advancements, are still prone to 'hallucinations' – generating factually incorrect but plausible-sounding information. In autonomous research, this can lead to erroneous conclusions or wasted computational resources on invalid experiments. Robust validation mechanisms and human oversight remain essential, especially for high-stakes applications.

Computational Cost and Efficiency

Running complex research pipelines with multiple LLM interactions, especially those involving extensive web searches or code execution, can be computationally intensive and costly. Optimizing the efficiency of these agents and developing smarter resource allocation strategies are ongoing areas of development. The choice of LLM (e.g., using a more compact model for certain tasks) can also impact cost and speed.
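A back-of-the-envelope estimate shows how model choice per step affects cost. The prices below are placeholders chosen to make the arithmetic easy, not real vendor pricing, and the "small" and "large" tiers and per-step token counts are likewise assumptions.

```python
# Placeholder prices in dollars per million tokens. These are NOT real vendor
# prices; substitute the current rates for the models you actually use.
PRICE_PER_MILLION_TOKENS = {"small": 1.0, "large": 10.0}


def estimate_cost(steps: list[tuple[str, int]]) -> float:
    """Sum the cost of (model_tier, token_count) steps at the placeholder prices."""
    return sum(
        tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS[tier] for tier, tokens in steps
    )


# Plan A sends every step to the large model.
all_large = [("large", 400_000), ("large", 400_000), ("large", 200_000)]
# Plan B routes the two bulk steps (for example, summarizing search results)
# to the small model and keeps the large model for the final step.
mixed = [("small", 400_000), ("small", 400_000), ("large", 200_000)]

print(f"all large: ${estimate_cost(all_large):.2f}")
print(f"mixed:     ${estimate_cost(mixed):.2f}")
```

The point of the sketch is the structure of the calculation: cost scales with tokens per step times the price of the tier handling that step, so routing high-volume, low-difficulty steps to a cheaper model has the largest effect.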

Platform-Specific Challenges: Windows Workflow

Deployment and setup can also present hurdles. A user inquired about "Windows 系统如何使用工作流3进行论文的撰写" (How to use workflow 3 for thesis writing on Windows system). This highlights the need for clear, comprehensive documentation and robust cross-platform compatibility. While Linux environments are often favored for development, broad adoption requires seamless operation on popular operating systems like Windows, which may involve specific configurations or workarounds.

Ethical Considerations and Bias

Autonomous research systems inherit the biases present in their training data. If the LLMs are trained on biased datasets, their research outputs may reflect and even amplify those biases, leading to skewed conclusions or unfair recommendations. Ethical guidelines for AI research, transparency in data sources, and continuous monitoring for bias are critical for responsible deployment.

The Role of LLMs in Autonomous Research

The capabilities of autonomous research systems like ARIS are inextricably linked to the advancements in Large Language Models. As of April 2026, we've seen a rapid proliferation of powerful LLMs, each with distinct strengths.

Diverse LLM Agents

- Claude Code/OpenClaw: These agents excel in code generation, debugging, and understanding complex programming logic. They are crucial for automating experiment implementation and software development tasks within ARIS.

- OpenAI's Codex: A pioneer in code-aware LLMs, Codex (and its successors) provides strong capabilities for translating natural language instructions into executable code, forming the backbone of many automated programming tasks.

- GLM-5 and MiniMAX 2.5: These represent a class of powerful, often multi-modal, LLMs capable of complex reasoning, synthesis, and understanding diverse forms of information. Their integration, as seen in the GitHub issue, demonstrates the ambition to leverage state-of-the-art models for advanced reasoning tasks, though they still present challenges in achieving full autonomy without intervention.

- GLM4.7: Another example of a highly capable model, its use in conjunction with Claude Code via a 'cc switch' for web search highlights the modular nature of ARIS and the potential for combining different LLMs to achieve specific functionalities, despite potential API-related hurdles.

The ability of ARIS to work with "any LLM agent" means that as new, more capable models emerge, the system can adapt and upgrade its underlying intelligence without a complete architectural overhaul. This future-proofing is a significant advantage in the fast-moving AI landscape.

Comparing ARIS with Other Autonomous Research Paradigms

While ARIS is a notable open-source project, it exists within a broader ecosystem of autonomous research endeavors. Understanding its position relative to others provides valuable context.

| Feature/Project | ARIS (Auto-Research-In-Sleep) | Andrej Karpathy's AutoResearch | Proprietary AI Lab Frameworks |
|---|---|---|---|
| Primary Focus | Autonomous ML research, idea discovery, experiment automation via LLMs. | Autonomous ML experimentation, focusing on iterative loops and GPU utilization. | Broad scientific discovery, drug design, material science, often with specialized hardware and data. |
| Interface/Methodology | Lightweight, Markdown-only skills, LLM-agnostic. | Python scripts defining experiment loops, focused on ML training. | Custom APIs, specialized domain-specific languages, GUI tools. |
| Lock-in/Flexibility | No framework, no lock-in; works with any LLM agent. Highly flexible. | Python-based, adaptable design pattern. | Often proprietary, significant vendor or platform lock-in. |
| Community/Accessibility | Open-source on GitHub, community-driven development. Accessible to individuals and small teams. | Open-source principles demonstrated, focus on design pattern. | Closed-source, limited access, high cost of entry. |
| Typical Use Cases | Early-stage research, hypothesis generation, literature review, code prototyping. | Hyperparameter tuning, model architecture search, repetitive ML experiments. | Large-scale, complex R&D projects requiring deep domain expertise and resources. |

ARIS distinguishes itself through its open-source nature, Markdown-centric approach, and LLM agnosticism, making it highly accessible and adaptable. While Karpathy's AutoResearch highlights the power of autonomous experimentation loops, ARIS broadens the scope to encompass the entire research lifecycle, from idea generation to cross-model review. Proprietary frameworks, on the other hand, often offer deeper integration with specialized hardware and datasets but come with significant cost and lack of transparency.

Setting Up and Troubleshooting Auto-Research-In-Sleep

Getting started with ARIS and similar projects requires a basic understanding of GitHub, Python (for orchestration, even if skills are Markdown), and API keys for chosen LLMs. The setup process typically involves cloning the repository, installing dependencies, and configuring environment variables for API access.

Addressing Common Issues

As highlighted by the GitHub issues, common challenges include:

- Automation Stalls: When the AUTO_PROCEED: true flag doesn't prevent the system from pausing, it often indicates an LLM's inability to generate a coherent next step or a failure to correctly interpret the previous output. Debugging involves examining the LLM's raw output and refining the Markdown skill prompts.

- Web Search Failures: The "did 0 searches in 2s" error usually points to an issue with the web search API integration or the LLM's specific configuration for invoking external tools. Verifying API keys, checking network connectivity, and ensuring the LLM has the correct permissions and format to call the search tool are essential steps.

- Platform Compatibility: For users on Windows, specific environment setups or dependency installations might be required that differ from Linux-based instructions. Consulting the project's issue tracker or community forums is often beneficial for platform-specific guidance.
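Several of these checks can be automated before a long run starts. The sketch below verifies that the expected API-key environment variables are set; it uses only the standard library and behaves the same on Windows and Linux. The variable names listed are examples, and which ones ARIS or a given agent actually reads is an assumption to confirm against the project's documentation.

```python
import os
from typing import Mapping

# Example variable names only; confirm the real ones in your agent's docs.
REQUIRED_VARS = ["ANTHROPIC_API_KEY", "OPENAI_API_KEY"]


def missing_env_vars(
    required: list[str], environ: Mapping[str, str] = os.environ
) -> list[str]:
    """Return the required variables that are unset or blank."""
    return [name for name in required if not environ.get(name, "").strip()]


missing = missing_env_vars(REQUIRED_VARS)
if missing:
    print("Missing configuration:", ", ".join(missing))
else:
    print("All required API keys are set.")
```

Running a check like this first turns a silent overnight stall into an immediate, readable message.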

The active community around open-source projects like ARIS on GitHub is a valuable resource for troubleshooting. Users often share solutions, workarounds, and best practices, contributing to the project's overall robustness.

The Future of Autonomous Research in 2026 and Beyond

The trajectory for autonomous research systems like those found under the Auto-Research-In-Sleep GitHub umbrella is steep and promising. As of April 2026, we are witnessing a paradigm shift in how research is conducted, and several key trends are shaping its future:

Enhanced LLM Capabilities

Future LLMs will possess even greater reasoning abilities, longer context windows, and improved factual accuracy, significantly reducing instances of hallucination and automation stalls. Multi-modal LLMs will become standard, allowing agents to understand and generate research across text, images, video, and scientific data formats seamlessly.

Specialized Agent Ecosystems

We can expect the emergence of highly specialized AI agents, each excelling in a particular aspect of research – a 'chemistry agent,' a 'software architecture agent,' or a 'legal research agent.' ARIS's LLM-agnostic design makes it perfectly positioned to integrate these specialized agents as they become available, forming powerful, customized research teams.

Human-AI Collaboration Refined

The future isn't about AI replacing human researchers entirely but enhancing their capabilities. Interfaces will become more intuitive, allowing humans to easily inject their insights, course-correct agents, and validate findings at critical junctures. This synergistic relationship will allow for tackling problems of unprecedented complexity and scale. Understanding the velocity of these advancements is akin to evaluating the intangible reinvestment velocity of these cutting-edge AI projects, where the returns are not just monetary but in knowledge and innovation.

Ethical AI and Governance

As autonomous research becomes more powerful, the need for robust ethical frameworks and governance will intensify. Ensuring transparency, accountability, and fairness in AI-driven discovery will be paramount. Open-source projects like ARIS play a crucial role here, fostering community scrutiny and collaborative development of ethical guidelines.

To further explore the advancements and practical implementations of such technologies, one might consider exploring resources like Auto-Research-In-Sleep GitHub: Autonomous AI Research in 2026, which offers deeper insights into the specific project and its ongoing development.

Conclusion

The Auto-Research-In-Sleep GitHub project, ARIS, stands as a testament to the rapid progress in autonomous AI research. In April 2026, it offers a glimpse into a future where the constraints of human time and effort in research are significantly mitigated. By leveraging lightweight Markdown skills and flexible LLM integration, ARIS empowers researchers, developers, and businesses to accelerate discovery, automate experimentation, and gain insights at an unprecedented pace.

While challenges related to automation reliability, web search integration, and platform compatibility persist, the active development and open-source nature of projects like ARIS mean these hurdles are being addressed collaboratively. The shift towards autonomous research is not just about efficiency; it's about fundamentally expanding the scope of what's possible in scientific and technological innovation. As LLMs continue to evolve and ethical frameworks mature, the dream of truly 'auto-research-in-sleep' will move closer to a fully realized, transformative reality for all industries.
